Using root

Using the root account in local environments is ok.

Try not to use it on production envs, thought.

You can choose which pipeline to run in your project’s configuration file.

See this page for more details.

You’ll need a MySQL user with the ability to create databases.

You can either use a UI such as PhpMyAdmin, or the mysql console:

CREATE USER 'user'@'localhost' IDENTIFIED BY 'password';

GRANT SELECT,INSERT,UPDATE,DELETE,CREATE,DROP ON *.* TO 'user'@'localhost';

FLUSH PRIVILEGES;

Remember to replace user, localhost and password with the correct values.

Using root

Using the root account in local environments is ok.

Try not to use it on production envs, thought.

Once your account is created, fill the [MYSQL] section in Crowl’s config.ini file.

You should be all set.

Simply launch a crawl and Crowl will:

foo_20180921-123000.urls table in this database, which will contain all data about crawled URLs.--links option, Crowl will also create a links table to store links.Once connected to PhpMyAdmin, simply select the right database and table. At the bottom of the list you’ll find an Export option in the Query result operations box:

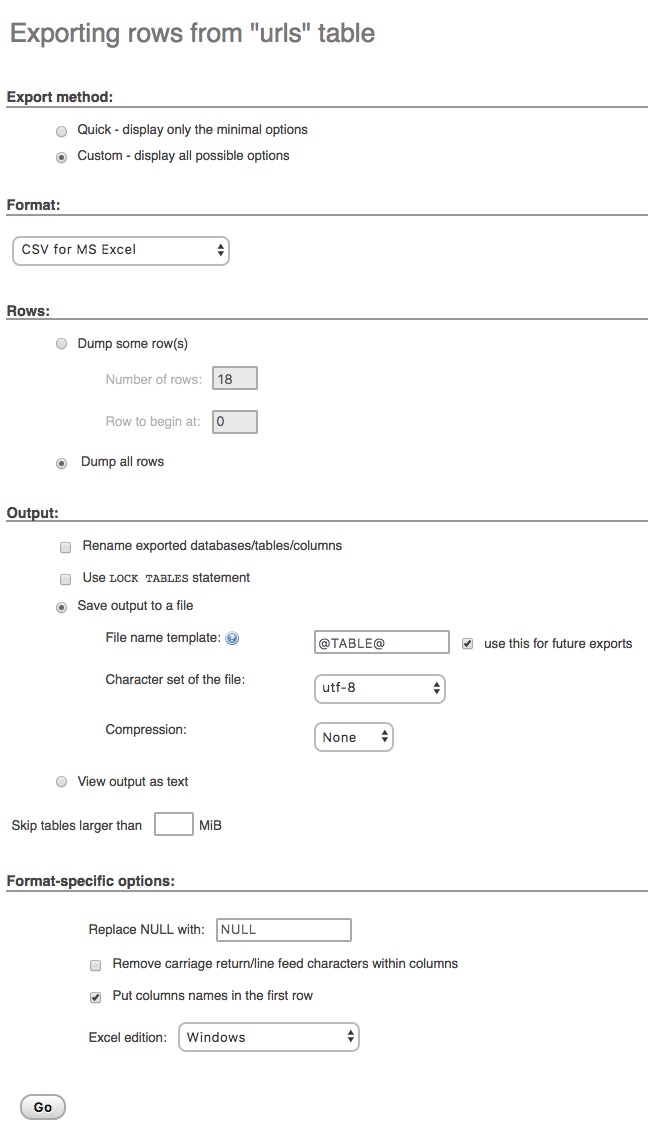

This will enable you to export all the data from your query, and customise the export format.

For example, here is how to download a CSV file with the correct format to import to Microsoft Excel:

There are several ways to export data from MySQL using the console.

The method we find the easiest is to export to a tab-separated file.

Here is how to export data from the urls table:

echo "SELECT * FROM urls;" | mysql -u user -p database > urls.tsv

And for the links table:

echo "SELECT * FROM links;" | mysql -u user -p database > links.tsv

Remember to replace user and database with the correct values. You can also use a custom SELECT statement, as well as another export name.

Count URLs per response code:

SELECT response_code,COUNT(*) FROM urls GROUP BY response_code;

Count URLs per depth level:

SELECT level,COUNT(*) FROM urls GROUP BY level;

Get a list of URLs sending a 50* response code:

SELECT * FROM urls WHERE response_code >= 500;

Get a list of links to 404s (you obviously have to store links for this to work):

SELECT links.*,urls.response_code FROM links LEFT JOIN urls ON links.target = urls.url WHERE urls.response_code = 404;